In a previous post we built ordered pairs and the Cartesian product — the tools that let us combine elements from two sets while respecting order. Now we take the most important step: using those pairs to describe connections between elements. That idea is exactly what we call binary relations.

Binary relations are everywhere: “is less than,” “is a sibling of,” “divides,” “is parallel to,” “belongs to.” All of these phrases connect two objects in a defined order. In this post we will formalize that concept and explore its fundamental properties.

Quick recap: ordered pairs and the Cartesian product

Before we begin, a brief reminder of the concepts from the previous post:

Ordered pair. An ordered pair \( (a, b) \) is a pairing of two elements where position counts — which comes first and which comes second. Two pairs are equal if and only if their corresponding components match:

\[ (a, b) = (c, d) \iff a = c \wedge b = d \]

Cartesian product. Given two sets \( A \) and \( B \), the Cartesian product \( A \times B \) is the set of all possible ordered pairs:

\[ A \times B = \{(a, b) \mid a \in A \wedge b \in B\} \]

The Cartesian product gives us the “universe of all combinations.” But in most real-world situations, not every pair is relevant — only those satisfying some condition or property. Selecting those pairs is exactly what a binary relation does.

Binary relation

Definition

A binary relation \( R \) from a set \( A \) to a set \( B \) is a subset of the Cartesian product \( A \times B \), made up of ordered pairs \( (a, b) \) satisfying a specific rule or condition:

\[ R \subseteq A \times B \]

In other words, out of all possible combinations in \( A \times B \), the relation \( R \) selects only those pairs that satisfy its defining condition.

In plain terms: The Cartesian product contains all possible combinations. The relation filters them, keeping only those that meet a given property.

Notation

If a pair \( (a, b) \) belongs to the relation \( R \), we write it in two equivalent ways:

| Notation | Read as |

|---|---|

| \( (a, b) \in R \) | “the pair \( (a, b) \) belongs to \( R \)” |

| \( aRb \) | “\( a \) is related to \( b \) under \( R \)” |

If the pair does not belong to the relation:

| Notation | Read as |

|---|---|

| \( (a, b) \notin R \) | “the pair \( (a, b) \) does not belong to \( R \)” |

| \( a\not{R}b \) | “\( a \) is not related to \( b \)” |

Connection with logic: For each pair \( (a, b) \in A \times B \), exactly one of two things holds: either \( aRb \) or \( a\not{R}b \). There is no middle ground — this is a direct application of the law of excluded middle from propositional logic.

Introductory example

Let \( A = \{1, 2, 3\} \) and \( B = \{x, y, z\} \). Define the relation:

\[ R = \{(1, y),\ (1, z),\ (3, y)\} \]

The Cartesian product \( A \times B \) has \( 3 \times 3 = 9 \) possible pairs. The relation \( R \) selects only 3 of them:

| \( A \times B \) | \( x \) | \( y \) | \( z \) |

|---|---|---|---|

| 1 | ✓ | ✓ | |

| 2 | |||

| 3 | ✓ |

Cells marked ✓ are pairs belonging to \( R \). Empty cells are pairs that exist in \( A \times B \) but were not selected.

Since \( R \subseteq A \times B \), \( R \) is a relation from \( A \) to \( B \). Under this relation:

- \( 1Ry \) ✓ — 1 is related to \( y \)

- \( 1Rz \) ✓ — 1 is related to \( z \)

- \( 3Ry \) ✓ — 3 is related to \( y \)

- \( 2\not{R}x \), \( 2\not{R}y \), \( 2\not{R}z \) — 2 is not related to any element of \( B \)

More examples

Example 1. Let \( A = \{1, 2, 3\} \) and \( B = \{2, 4, 5, 6\} \). Consider the following relations from \( A \) to \( B \):

\[ R_1 = \{(1, 4),\ (2, 5),\ (2, 6)\} \]

\[ \begin{align} R_2 & = \{(x, y) \in A \times B \mid x + y = 7\} \\ & = \{(1, 6),\ (2, 5),\ (3, 4)\} \end{align} \]

Notice that \( R_2 \) is defined by a condition (that the sum equal 7), and we check which pairs in \( A \times B \) satisfy it.

Example 2. Familiar relations on numerical sets:

| Relation | Sets | Example |

|---|---|---|

| “is less than” (\( < \)) | \( \mathbb{Z} \times \mathbb{Z} \) | \( 3 < 5 \) ✓, but \( 5 < 3 \) ✗ |

| “divides” (\( \mid \)) | \( \mathbb{N} \times \mathbb{N} \) | \( 3 \mid 12 \) ✓, but \( 5 \nmid 12 \) ✗ |

| “equals” (\( = \)) | Any set | \( 7 = 7 \) ✓ |

What does “divides” mean? We say \( a \) divides \( b \) (written \( a \mid b \)) when the division \( \frac{b}{a} \) yields an exact integer with no remainder. For example: \( 3 \mid 12 \) because \( \frac{12}{3} = 4 \in \mathbb{Z} \), but \( 5 \nmid 12 \) because \( \frac{12}{5} = 2.4 \notin \mathbb{Z} \).

Example 3. Geometric relations:

| Relation | Set | Property |

|---|---|---|

| “is parallel to” (\( \parallel \)) | Lines in the plane | \( L_1 \parallel L_2 \) |

| “is perpendicular to” (\( \perp \)) | Lines in the plane | \( L_1 \perp L_2 \) |

| “is congruent to” (\( \cong \)) | Triangles | \( \triangle_1 \cong \triangle_2 \) |

Example 4. Everyday relations:

- If \( A = \{\text{Peru, Ecuador, Colombia}\} \) and \( B = \{\text{Lima, Quito, Bogotá}\} \), the relation “is the capital of” gives:

\[ R = \{(\text{Peru, Lima}),\ (\text{Ecuador, Quito}),\ (\text{Colombia, Bogotá})\} \]

Homogeneous and heterogeneous relations

Relations fall into two categories depending on the sets involved:

Heterogeneous relation. When \( A \neq B \), the relation connects elements of two different sets:

\[ R \subseteq A \times B, \quad A \neq B \]

All the examples above involving distinct sets are heterogeneous.

Homogeneous relation (or relation on a set). When \( A = B \), the relation connects elements within the same set:

\[ R \subseteq A \times A = A^2 \]

In this case we say \( R \) is a relation on \( A \).

Why does this distinction matter? Homogeneous relations let us analyze the internal structure of a set: are there symmetries? Is there an ordering? Are some elements equivalent? The properties we will study shortly — reflexivity, symmetry, transitivity — only make sense for homogeneous relations.

Example. Let \( A = \{1, 2, 3, 4\} \) and define the relation “\( x \) is less than \( y \)”:

\[ \begin{align} R & = \{(x, y) \in A^2 \mid x < y\} \\ & = \{(1,2),\ (1,3),\ (1,4),\ (2,3),\ (2,4),\ (3,4)\} \end{align} \]

This is a homogeneous relation (on \( A \)) because both \( x \) and \( y \) come from the same set.

Special relations

Every set \( A \) has three special relations that are always defined:

| Relation | Definition | Description |

|---|---|---|

| Empty relation | \( R = \emptyset \) | No element is related to any other |

| Universal relation | \( R = A \times A \) | Every element is related to every other (including itself) |

| Identity relation (diagonal) | \( \Delta_A = \{(a, a) \mid a \in A\} \) | Each element is related only to itself |

Example of the identity relation. If \( A = \{1, 2, 3\} \):

\[ \Delta_A = \{(1, 1),\ (2, 2),\ (3, 3)\} \]

Connection. The identity relation \( \Delta_A \) is exactly the diagonal \( D(A) \) studied in the previous post on the Cartesian product. Each element is paired with itself.

How many relations exist on a set?

Since every relation on \( A \) is a subset of \( A^2 \), the number of possible relations equals the number of subsets of \( A^2 \).

Reminder. A set with \( m \) elements has exactly \( 2^m \) subsets (for details, see the post on power sets in set theory). Since \( A^2 \) has \( n^2 \) elements when \( n(A) = n \), we apply the formula with \( m = n^2 \):

If \( n(A) = n \), then there are \( 2^{n^2} \) relations on \( A \).

Example. If \( A = \{a, b\} \), then \( A^2 \) has \( 2^2 = 4 \) elements, and there are \( 2^4 = 16 \) possible relations on \( A \) — from the empty relation \( \emptyset \) to the universal relation \( A^2 \), and everything in between.

Explosive growth. A set with just 3 elements admits \( 2^9 = 512 \) possible relations. With 4 elements, that jumps to \( 2^{16} = 65{,}536 \). This shows the enormous structural richness that relations bring to even small sets.

Domain and range of a relation

When we define a relation \( R \) from \( A \) to \( B \), not every element of \( A \) necessarily takes part in the relation, nor does every element of \( B \) necessarily get reached. To describe this, we define two fundamental sets.

Source set and target set

Given a relation \( R: A \to B \) (that is, \( R \subseteq A \times B \)):

- \( A \) is called the source set (or initial set)

- \( B \) is called the target set (or final set, or codomain)

The first components of the pairs \( (a, b) \in R \) come from \( A \), and the second components come from \( B \).

Domain

The domain of a relation \( R \) from \( A \) to \( B \) is the set of all first components of the ordered pairs in \( R \):

\[ \text{Dom}(R) = \{x \in A \mid \exists y \in B,\ (x, y) \in R\} \]

That is, \( x \in \text{Dom}(R) \) if and only if there is at least one \( y \in B \) such that \( x \) is related to \( y \).

In plain terms: The domain is the set of elements of \( A \) that participate in the relation — those that appear as first components in at least one pair of \( R \).

Range

The range of a relation \( R \) from \( A \) to \( B \) is the set of all second components of the ordered pairs in \( R \):

\[ \text{Ran}(R) = \{y \in B \mid \exists x \in A,\ (x, y) \in R\} \]

That is, \( y \in \text{Ran}(R) \) if and only if there is at least one \( x \in A \) that is related to \( y \).

In plain terms: The range is the set of elements of \( B \) that are reached by the relation — those that appear as second components in at least one pair of \( R \).

Important note. The domain is always a subset of \( A \) and the range is always a subset of \( B \), but they need not equal \( A \) and \( B \):

\[ \text{Dom}(R) \subseteq A, \qquad \text{Ran}(R) \subseteq B \]

Example with finite sets

Let \( A = \{1, 2, 3\} \), \( B = \{x, y, z\} \), and the relation:

\[ R = \{(1, y),\ (1, z),\ (3, y)\} \]

From the diagram we can read off:

- \( \text{Dom}(R) = \{1, 3\} \) — the elements of \( A \) that send arrows

- \( \text{Ran}(R) = \{y, z\} \) — the elements of \( B \) that receive arrows

- The element \( 2 \in A \) sends no arrows: \( 2 \notin \text{Dom}(R) \)

- The element \( x \in B \) receives no arrows: \( x \notin \text{Ran}(R) \)

Example with an algebraic condition

Let \( A = \{1, 2, 5, 6\} \) and \( B = \{3, 5, 7, 9\} \). Define:

\[ R = \{(x, y) \in A \times B \mid x + y = 8\} \]

We check which combinations sum to 8:

| \( x \in A \) | \( y = 8 – x \) | \( y \in B \)? | Pair |

|---|---|---|---|

| 1 | 7 | ✓ | (1, 7) |

| 2 | 6 | ✗ | — |

| 5 | 3 | ✓ | (5, 3) |

| 6 | 2 | ✗ | — |

\[ R = \{(1, 7),\ (5, 3)\} \]

- \( \text{Dom}(R) = \{1, 5\} \)

- \( \text{Ran}(R) = \{3, 7\} \)

Example with infinite sets

Over the natural numbers, define:

\[ R = \{(x, y) \in \mathbb{N} \times \mathbb{N} \mid 3x – 2y = 6\} \]

Solving for \( y \): \( y = \frac{3x – 6}{2} \). For \( y \in \mathbb{N} \), we need \( x \) to be even and \( x > 2 \). So:

- \( x = 4 \implies y = 3 \)

- \( x = 6 \implies y = 6 \)

- \( x = 8 \implies y = 9 \)

- \( \ldots \)

- \( \text{Dom}(R) = \{4, 6, 8, 10, \ldots\} \)

- \( \text{Ran}(R) = \{3, 6, 9, 12, \ldots\} \)

Properties of domain and range

Let \( R \) and \( S \) be two relations from \( A \) to \( B \). The following properties hold:

| Property | Domain | Range |

|---|---|---|

| Union | \( \text{Dom}(R \cup S) = \text{Dom}(R) \cup \text{Dom}(S) \) | \( \text{Ran}(R \cup S) = \text{Ran}(R) \cup \text{Ran}(S) \) |

| Intersection | \( \text{Dom}(R \cap S) \subseteq \text{Dom}(R) \cap \text{Dom}(S) \) | \( \text{Ran}(R \cap S) \subseteq \text{Ran}(R) \cap \text{Ran}(S) \) |

| Difference | \( \text{Dom}(R) – \text{Dom}(S) \subseteq \text{Dom}(R – S) \) | \( \text{Ran}(R) – \text{Ran}(S) \subseteq \text{Ran}(R – S) \) |

Remark. For union, the equalities are exact (\( = \)). But for intersection and difference, only inclusion (\( \subseteq \)) holds — not necessarily equality. Two relations can share the same domain or range without sharing the same pairs.

Proof of the union property for the domain

\( \text{Dom}(R \cup S) = \text{Dom}(R) \cup \text{Dom}(S) \)

(a) \( \text{Dom}(R \cup S) \subseteq \text{Dom}(R) \cup \text{Dom}(S) \):

- Let \( x \in \text{Dom}(R \cup S) \) — hypothesis

- \( \implies \exists y \in B \) such that \( (x, y) \in R \cup S \) — def. of domain

- \( \implies [\exists y \in B,\ (x, y) \in R] \vee [\exists y \in B,\ (x, y) \in S] \) — def. of \( \cup \)

- \( \implies x \in \text{Dom}(R) \vee x \in \text{Dom}(S) \) — def. of domain

- \( \implies x \in \text{Dom}(R) \cup \text{Dom}(S) \) — def. of \( \cup \)

- \( \therefore \text{Dom}(R \cup S) \subseteq \text{Dom}(R) \cup \text{Dom}(S) \) — from (1) and (5)

(b) \( \text{Dom}(R) \cup \text{Dom}(S) \subseteq \text{Dom}(R \cup S) \):

- Let \( x \in \text{Dom}(R) \cup \text{Dom}(S) \) — hypothesis

- \( \implies x \in \text{Dom}(R) \vee x \in \text{Dom}(S) \) — def. of \( \cup \)

- \( \implies [\exists y \in B,\ (x, y) \in R] \vee [\exists y \in B,\ (x, y) \in S] \) — def. of domain

- \( \implies \exists y \in B,\ (x, y) \in R \cup S \) — def. of \( \cup \)

- \( \implies x \in \text{Dom}(R \cup S) \) — def. of domain

- \( \therefore \text{Dom}(R) \cup \text{Dom}(S) \subseteq \text{Dom}(R \cup S) \) — from (1) and (5)

From (a) and (b): \( \text{Dom}(R \cup S) = \text{Dom}(R) \cup \text{Dom}(S) \). ∎

Proof of the intersection property for the domain

\( \text{Dom}(R \cap S) \subseteq \text{Dom}(R) \cap \text{Dom}(S) \)

- Let \( x \in \text{Dom}(R \cap S) \) — hypothesis

- \( \implies \exists y \in B \) such that \( (x, y) \in R \cap S \) — def. of domain

- \( \implies [\exists y \in B,\ (x, y) \in R] \wedge [\exists y \in B,\ (x, y) \in S] \) — def. of \( \cap \)

- \( \implies x \in \text{Dom}(R) \wedge x \in \text{Dom}(S) \) — def. of domain

- \( \implies x \in \text{Dom}(R) \cap \text{Dom}(S) \) — def. of \( \cap \)

- \( \therefore \text{Dom}(R \cap S) \subseteq \text{Dom}(R) \cap \text{Dom}(S) \) — from (1) and (5) ∎

Why only \( \subseteq \) and not \( = \)? Because two relations can share the same element \( x \) in their domains while associating it with different elements \( y \). For instance, if \( R = \{(1, a)\} \) and \( S = \{(1, b)\} \), then \( \text{Dom}(R) \cap \text{Dom}(S) = \{1\} \), but \( R \cap S = \emptyset \), so \( \text{Dom}(R \cap S) = \emptyset \).

Note. The range properties are proved in exactly the same way, swapping “first component” for “second component” throughout.

Graphical representations of a relation

Binary relations can be visualized in several ways. Each representation highlights a different aspect and proves useful in different contexts.

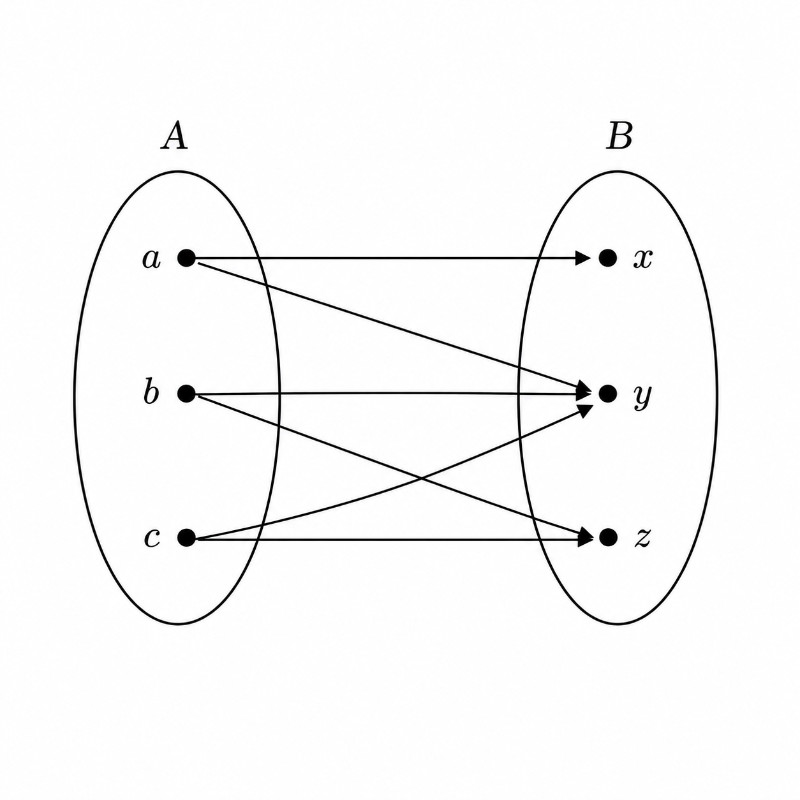

Arrow diagram (sagittal diagram)

Two ovals are drawn: one for the set \( A \) (source) and one for \( B \) (target). Arrows are then drawn from \( a \) to \( b \) for each pair \( (a, b) \in R \).

Example. Let \( A = \{1, 2, 3, 4\} \), \( B = \{a, b, c\} \), and the relation:

\[ R = \{(1, a),\ (1, c),\ (2, b),\ (3, a),\ (3, b)\} \]

Notice that:

- Element \( 4 \in A \) sends no arrows — it does not participate in the relation.

- Element \( c \in B \) receives only one arrow (from 1).

- A single element can send multiple arrows (like 1 and 3).

Advantage of the arrow diagram: You can immediately see the domain (who sends arrows), the range (who receives them), and the overall structure of the connections.

Relation matrix

A rectangular table is built with rows representing elements of \( A \) and columns representing elements of \( B \). Each cell holds a 1 if the pair belongs to the relation, or a 0 if it does not.

Using the same relation as above:

| \( R \) | a | b | c |

|---|---|---|---|

| 1 | 1 | 0 | 1 |

| 2 | 0 | 1 | 0 |

| 3 | 1 | 1 | 0 |

| 4 | 0 | 0 | 0 |

Row 1 reads 1, 0, 1 — meaning \( (1, a) \in R \) and \( (1, c) \in R \), but \( (1, b) \notin R \).

Advantage of the matrix: It is compact and exhaustive — it shows all possible combinations at once. It also prepares students for linear algebra (matrices and matrix operations).

Quick read: The domain corresponds to rows with at least one 1. The range corresponds to columns with at least one 1.

Directed graph (homogeneous relations)

When the relation is homogeneous (\( R \subseteq A \times A \)), a special representation is used: a directed graph. Elements are drawn as points (nodes) and an arrow is drawn from \( x \) to \( y \) whenever \( (x, y) \in R \).

Example. Let \( A = \{1, 2, 3, 4\} \) and the relation:

\[ R = \{(1, 2),\ (2, 2),\ (2, 4),\ (3, 2),\ (3, 4),\ (4, 1),\ (4, 3)\} \]

Notice the arrow from 2 to itself (the self-loop) — this indicates \( (2, 2) \in R \), meaning 2 is related to itself. Self-loops become important when we study the reflexive property.

Advantage of the directed graph: It reveals the structural properties of the relation — cycles, paths, self-loops. It is the standard representation in graph theory.

Cartesian plane representation

When \( A \) and \( B \) are subsets of the real numbers, the pairs \( (a, b) \in R \) are plotted as points in the Cartesian plane. For finite sets, these are isolated dots; for relations defined by equations or inequalities, the corresponding regions are graphed.

Example with a finite set. The relation \( R = \{(1, 2),\ (2, 2),\ (2, 4),\ (3, 2),\ (3, 4),\ (4, 1),\ (4, 3)\} \) on \( A = \{1, 2, 3, 4\} \):

Each orange dot represents a pair \( (x, y) \in R \). The dashed diagonal \( y = x \) serves as a reference: points on it would correspond to pairs \( (a, a) \) — the identity relation. Here, only \( (2, 2) \) lies on the diagonal.

Advantage of the Cartesian plane: Ideal for numerical relations, especially those defined by equations or inequalities (such as \( y \leq 2x + 1 \)). It connects directly to the graphing of functions, which we will explore in future posts.

Summary of representations

| Representation | Best for… | Prepares for… |

|---|---|---|

| Arrow diagram | Heterogeneous relations; seeing domain and range | Functions, domain/codomain |

| Relation matrix | Exhaustiveness checks; finite sets | Linear algebra, matrices |

| Directed graph | Homogeneous relations; spotting cycles and loops | Graph theory, networks |

| Cartesian plane | Numerical relations; equations/inequalities | Graphing functions, calculus |

Inverse relation

Definition

Given a relation \( R \) from \( A \) to \( B \), the inverse relation (or converse) of \( R \), written \( R^{-1} \), is the relation from \( B \) to \( A \) obtained by reversing the order of every pair:

\[ R^{-1} = \{(y, x) \in B \times A \mid (x, y) \in R\} \]

That is:

\[ (y, x) \in R^{-1} \iff (x, y) \in R \]

In plain terms: If the original relation \( R \) has an arrow from \( x \) to \( y \), the inverse \( R^{-1} \) has an arrow from \( y \) to \( x \). All the arrows are flipped.

Example

Let \( A = \{1, 2, 3\} \), \( B = \{x, y, z\} \), and the relation:

\[ R = \{(1, y),\ (1, z),\ (3, y)\} \]

Reversing each pair:

\[ R^{-1} = \{(y, 1),\ (z, 1),\ (y, 3)\} \]

Note that \( R \) is a relation from \( A \) to \( B \), while \( R^{-1} \) is a relation from \( B \) to \( A \) — the source and target sets swap.

Diagram of the original relation \( R: A \to B \):

Diagram of the inverse relation \( R^{-1}: B \to A \):

Domain and range of the inverse

Reversing the pairs swaps the roles of first and second components. Therefore:

\[ \text{Dom}(R^{-1}) = \text{Ran}(R) \] \[ \text{Ran}(R^{-1}) = \text{Dom}(R) \]

In the example above: \( \text{Dom}(R) = \{1, 3\} \) and \( \text{Ran}(R) = \{y, z\} \). Inverting: \( \text{Dom}(R^{-1}) = \{y, z\} \) and \( \text{Ran}(R^{-1}) = \{1, 3\} \). ✓

The inverse of the inverse

Inverting twice returns the original relation:

\[ (R^{-1})^{-1} = R \]

Why? If \( (x, y) \in R \), then \( (y, x) \in R^{-1} \), and inverting again gives \( (x, y) \in (R^{-1})^{-1} \). The pairs return to their original state.

Graphical symmetry

If \( R \) is a relation on \( \mathbb{R} \) (that is, \( R \subseteq \mathbb{R}^2 \)), the graph of \( R^{-1} \) in the Cartesian plane is the reflection of the graph of \( R \) across the line \( y = x \).

This holds because if the point \( (a, b) \) belongs to \( R \), the point \( (b, a) \) belongs to \( R^{-1} \) — and \( (b, a) \) is exactly the mirror image of \( (a, b) \) across the diagonal.

Example. If \( R = \{(x, y) \mid y = 3x + 2\} \), then:

\[ R^{-1} = \{(x, y) \mid x = 3y + 2\} = \left\{(x, y) \mid y = \frac{x – 2}{3}\right\} \]

Some pairs: \( (0, 2) \in R \iff (2, 0) \in R^{-1} \), \( (1, 5) \in R \iff (5, 1) \in R^{-1} \).

Graphically, both lines are symmetric about \( y = x \):

Properties of the inverse relation

Let \( R \subseteq A \times B \) and \( S \subseteq A \times B \) be two relations. The following hold:

| Property | Statement |

|---|---|

| Union | \( (R \cup S)^{-1} = R^{-1} \cup S^{-1} \) |

| Intersection | \( (R \cap S)^{-1} = R^{-1} \cap S^{-1} \) |

| Difference | \( (R – S)^{-1} = R^{-1} – S^{-1} \) |

Pattern: The inversion operation “distributes” over all set operations. This works because inverting pairs is an element-wise operation that applies cleanly to union, intersection, and difference alike.

Proof of the intersection property

\( (R \cap S)^{-1} = R^{-1} \cap S^{-1} \)

(a) \( (R \cap S)^{-1} \subseteq R^{-1} \cap S^{-1} \):

- Let \( (x, y) \in (R \cap S)^{-1} \) — hypothesis

- \( \implies (y, x) \in R \cap S \) — def. of inverse relation

- \( \implies (y, x) \in R \wedge (y, x) \in S \) — def. of \( \cap \)

- \( \implies (x, y) \in R^{-1} \wedge (x, y) \in S^{-1} \) — def. of inverse relation

- \( \implies (x, y) \in R^{-1} \cap S^{-1} \) — def. of \( \cap \)

- \( \therefore (R \cap S)^{-1} \subseteq R^{-1} \cap S^{-1} \) — from (1) and (5)

(b) \( R^{-1} \cap S^{-1} \subseteq (R \cap S)^{-1} \):

- Let \( (y, x) \in R^{-1} \cap S^{-1} \) — hypothesis

- \( \implies (y, x) \in R^{-1} \wedge (y, x) \in S^{-1} \) — def. of \( \cap \)

- \( \implies (x, y) \in R \wedge (x, y) \in S \) — def. of inverse relation

- \( \implies (x, y) \in R \cap S \) — def. of \( \cap \)

- \( \implies (y, x) \in (R \cap S)^{-1} \) — def. of inverse relation

- \( \therefore R^{-1} \cap S^{-1} \subseteq (R \cap S)^{-1} \) — from (1) and (5)

From (a) and (b): \( (R \cap S)^{-1} = R^{-1} \cap S^{-1} \). ∎

Note. The proofs for the union and difference properties follow exactly the same pattern: apply the definition of the inverse (reverse the pair), then the definition of the set operation, and reassemble.

Composition of relations

When two relations “chain together” — the first connecting \( A \) to \( B \) and the second connecting \( B \) to \( C \) — we can combine them into a single direct relation from \( A \) to \( C \). This operation is called composition.

Definition

Let \( R \) be a relation from \( A \) to \( B \) and \( S \) a relation from \( B \) to \( C \). The composition of \( R \) and \( S \), written \( S \circ R \), is the relation from \( A \) to \( C \) defined by:

\[ S \circ R = \{(x, z) \in A \times C \mid \exists y \in B,\ (x, y) \in R \wedge (y, z) \in S\} \]

That is:

\[ (x, z) \in S \circ R \iff \exists y \in B \text{ such that } (x, y) \in R \text{ and } (y, z) \in S \]

In plain terms: An element \( x \in A \) is related to \( z \in C \) under \( S \circ R \) if there is an “intermediary” \( y \in B \) acting as a bridge: \( x \) connects to \( y \) via \( R \), and \( y \) connects to \( z \) via \( S \).

Note on notation. \( S \circ R \) is read “\( S \) composed with \( R \)” or “\( S \) after \( R \).” \( R \) is applied first and \( S \) second, even though \( S \) appears on the left — this is the same convention used for function composition.

Diagram of composition

The composition \( S \circ R \) “jumps” directly from \( A \) to \( C \), using \( B \) as a stepping stone.

Existence condition. The composition \( S \circ R \) is nonempty if and only if \( \text{Ran}(R) \cap \text{Dom}(S) \neq \emptyset \). There must be at least one intermediate element \( y \) that is both “reached” by \( R \) and “used” by \( S \).

Example

Let \( A = \{1, 2, 3, 4\} \), \( B = \{a, b, c, d\} \), \( C = \{x, y, z\} \), and the relations:

\[ R = \{(1, a),\ (2, d),\ (3, a),\ (3, b),\ (3, d)\} \] \[ S = \{(b, x),\ (b, z),\ (c, y),\ (d, z)\} \]

To find \( S \circ R \), we look for the “bridges” in \( B \) connecting \( A \) to \( C \):

| Pair in \( R \) | Element of \( B \) | Pairs in \( S \) with that element | Pair in \( S \circ R \) |

|---|---|---|---|

| \( (1, a) \) | \( a \) | None | — |

| \( (2, d) \) | \( d \) | \( (d, z) \) | \( (2, z) \) |

| \( (3, a) \) | \( a \) | None | — |

| \( (3, b) \) | \( b \) | \( (b, x),\ (b, z) \) | \( (3, x),\ (3, z) \) |

| \( (3, d) \) | \( d \) | \( (d, z) \) | \( (3, z) \) |

\[ S \circ R = \{(2, z),\ (3, x),\ (3, z)\} \]

Notice that \( (3, z) \) arises from two different paths in the last two rows, but since this is a set, it is counted only once.

Non-commutativity

Composition of relations is not commutative in general:

\[ S \circ R \neq R \circ S \]

In fact, \( R \circ S \) may not even be defined if the sets don’t align (\( R \) requires its input to come from the range of \( S \)). And when both compositions exist, they typically produce different results.

Composition with itself

If \( R \) is a homogeneous relation (\( R \subseteq A \times A \)), composing \( R \) with itself is always well-defined. The standard notation is:

- \( R^2 = R \circ R \)

- \( R^3 = R^2 \circ R = R \circ R \circ R \)

- \( \ldots \)

In general, \( R^n = R^{n-1} \circ R \) for all \( n \geq 1 \).

Interpretation. \( (x, z) \in R^2 \) means there is a path of length 2 from \( x \) to \( z \) — that is, some intermediary \( y \) with \( xRy \) and \( yRz \). More generally, \( (x, z) \in R^n \) means there is a path of length \( n \) from \( x \) to \( z \).

Properties of composition

| Property | Statement |

|---|---|

| Associativity | \( (T \circ S) \circ R = T \circ (S \circ R) \) |

| Non-commutativity | \( S \circ R \neq R \circ S \) in general |

| Inverse of a composition | \( (S \circ R)^{-1} = R^{-1} \circ S^{-1} \) |

Note. The inverse of a composition reverses the order — just like getting dressed and undressed: you put on socks before shoes, but take off shoes before socks.

Proof of associativity

\( (T \circ S) \circ R = T \circ (S \circ R) \)

Let \( R \subseteq A \times B \), \( S \subseteq B \times C \), and \( T \subseteq C \times D \).

\( (x, u) \in (T \circ S) \circ R \)

\( \iff \exists y \in B \) such that \( (x, y) \in R \wedge (y, u) \in T \circ S \)

\( \iff \exists y \in B \) such that \( (x, y) \in R \wedge [\exists z \in C,\ (y, z) \in S \wedge (z, u) \in T] \)

\( \iff \exists y \in B,\ \exists z \in C \) such that \( (x, y) \in R \wedge (y, z) \in S \wedge (z, u) \in T \)

\( \iff \exists z \in C \) such that \( [\exists y \in B,\ (x, y) \in R \wedge (y, z) \in S] \wedge (z, u) \in T \)

\( \iff \exists z \in C \) such that \( (x, z) \in S \circ R \wedge (z, u) \in T \)

\( \iff (x, u) \in T \circ (S \circ R) \)

Since every step is a biconditional, the equality is proved. ∎

Properties of homogeneous relations

The properties studied below apply only to homogeneous relations — that is, relations \( R \subseteq A \times A \), where a single set plays the role of both source and target. These properties describe the internal structure of the relation and allow us to classify it.

Reflexive property

A relation \( R \) on \( A \) is reflexive if every element of \( A \) is related to itself:

\[ \forall a \in A: (a, a) \in R \]

Equivalently, \( R \) is reflexive if and only if the diagonal \( \Delta_A \) (see the section on special relations above) is contained in \( R \):

\[ R \text{ is reflexive} \iff \Delta_A \subseteq R \]

Visually: In the relation matrix, \( R \) is reflexive when the entire main diagonal consists of 1s.

Example (reflexive). Let \( A = \{2, 3, 4\} \) and \( R = \{(2,2),\ (2,3),\ (3,3),\ (4,4)\} \).

We check: \( (2,2) \in R \) ✓, \( (3,3) \in R \) ✓, \( (4,4) \in R \) ✓. Since all pairs \( (a,a) \) are present, \( R \) is reflexive.

Its relation matrix:

| 2 | 3 | 4 | |

|---|---|---|---|

| 2 | 1 | 1 | 0 |

| 3 | 0 | 1 | 0 |

| 4 | 0 | 0 | 1 |

The main diagonal (in bold) is all 1s — confirming reflexivity.

Example (not reflexive). Let \( A = \{1, 2, 3\} \) and \( R = \{(1,1),\ (1,2),\ (2,3)\} \).

Checking the diagonal: \( (1,1) \in R \) ✓, but \( (2,2) \notin R \) ✗. A single missing \( (a,a) \) pair is enough to make \( R \) not reflexive.

Caution: “Not reflexive” is not the same as “irreflexive” — a distinction we will draw shortly.

Classic examples of reflexive relations:

| Relation | Set | Reflexive? | Reason |

|---|---|---|---|

| \( \leq \) | \( \mathbb{Z} \) | ✓ | \( a \leq a \) for every \( a \) |

| \( \subseteq \) | Sets | ✓ | \( A \subseteq A \) for every \( A \) |

| \( \mid \) (divides) | \( \mathbb{N} \) | ✓ | \( n \mid n \) for every \( n \) |

| \( = \) (equality) | Any set | ✓ | \( a = a \) for every \( a \) |

Irreflexive property

A relation \( R \) on \( A \) is irreflexive if no element of \( A \) is related to itself:

\[ \forall a \in A: (a, a) \notin R \]

Equivalently:

\[ R \text{ is irreflexive} \iff R \cap \Delta_A = \emptyset \]

Visually: In the relation matrix, \( R \) is irreflexive when the entire main diagonal consists of 0s.

Example. Let \( A = \{a, b, c, d\} \) and \( R = \{(a, b),\ (b, c),\ (d, b)\} \).

No pair of the form \( (x, x) \) belongs to \( R \): \( (a,a) \notin R \), \( (b,b) \notin R \), \( (c,c) \notin R \), \( (d,d) \notin R \). Therefore \( R \) is irreflexive.

Its relation matrix:

| a | b | c | d | |

|---|---|---|---|---|

| a | 0 | 1 | 0 | 0 |

| b | 0 | 0 | 1 | 0 |

| c | 0 | 0 | 0 | 0 |

| d | 0 | 1 | 0 | 0 |

The main diagonal (in bold) is all 0s — confirming irreflexivity.

Classic examples:

| Relation | Set | Irreflexive? | Reason |

|---|---|---|---|

| \( < \) (strict less-than) | \( \mathbb{Z} \) | ✓ | \( a \not< a \) |

| \( \perp \) (perpendicular) | Lines | ✓ | No line is perpendicular to itself |

| \( \neq \) (inequality) | Any set | ✓ | \( a \neq a \) is false |

Reflexive, not reflexive, and irreflexive

These three concepts must be carefully distinguished:

| Concept | Condition on pairs \( (a,a) \) | Definition |

|---|---|---|

| Reflexive | Every \( (a,a) \in R \) | \( \forall a \in A: (a,a) \in R \) |

| Not reflexive | At least one \( (a,a) \notin R \) | \( \exists a \in A: (a,a) \notin R \) |

| Irreflexive | No \( (a,a) \in R \) | \( \forall a \in A: (a,a) \notin R \) |

The key: “Not reflexive” is simply the negation of reflexive — one missing \( (a,a) \) pair is enough. “Irreflexive” is a stronger condition: not a single \( (a,a) \) pair may appear in \( R \). Every irreflexive relation is not reflexive, but not every non-reflexive relation is irreflexive.

Comparative example. Let \( A = \{1, 2, 3\} \):

| Relation | \( (1,1) \) | \( (2,2) \) | \( (3,3) \) | Classification |

|---|---|---|---|---|

| \( R_1 = \{(1,1),\ (2,2),\ (3,3),\ (1,2)\} \) | ✓ | ✓ | ✓ | Reflexive |

| \( R_2 = \{(1,1),\ (1,2),\ (2,3)\} \) | ✓ | ✗ | ✗ | Not reflexive (but not irreflexive) |

| \( R_3 = \{(1,2),\ (2,3),\ (3,1)\} \) | ✗ | ✗ | ✗ | Irreflexive (and also not reflexive) |

Symmetric property

A relation \( R \) on \( A \) is symmetric if whenever \( a \) is related to \( b \), \( b \) is also related to \( a \):

\[ \forall a, b \in A: (a, b) \in R \implies (b, a) \in R \]

Equivalently:

\[ R \text{ is symmetric} \iff R = R^{-1} \]

Visually: In the relation matrix, \( R \) is symmetric when the matrix is symmetric about the diagonal — that is, \( M_{ij} = M_{ji} \) for all \( i, j \). (Here \( M_{ij} \) denotes the entry in row \( i \) and column \( j \).)

Example (symmetric). Let \( A = \{1, 2, 3, 4, 5\} \) and \( R = \{(1,1),\ (1,2),\ (2,1),\ (3,4),\ (4,3)\} \).

We check: \( (1,2) \in R \) and \( (2,1) \in R \) ✓; \( (3,4) \in R \) and \( (4,3) \in R \) ✓; \( (1,1) \) is its own mirror ✓. \( R \) is symmetric.

Its relation matrix:

| 1 | 2 | 3 | 4 | 5 | |

|---|---|---|---|---|---|

| 1 | 1 | 1 | 0 | 0 | 0 |

| 2 | 1 | 0 | 0 | 0 | 0 |

| 3 | 0 | 0 | 0 | 1 | 0 |

| 4 | 0 | 0 | 1 | 0 | 0 |

| 5 | 0 | 0 | 0 | 0 | 0 |

Every 1 off the diagonal has its mirror 1 on the other side — the matrix is a perfect reflection about the diagonal.

Example (not symmetric). Let \( A = \{1, 2, 3\} \) and \( R = \{(1,2),\ (3,2),\ (2,3)\} \).

We check: \( (3,2) \in R \) and \( (2,3) \in R \) ✓, but \( (1,2) \in R \) and \( (2,1) \notin R \) ✗. One pair without its mirror is enough to make \( R \) not symmetric.

Classic examples:

| Relation | Symmetric? | Reason |

|---|---|---|

| “is a sibling of” | ✓ | If \( x \) is a sibling of \( y \), then \( y \) is a sibling of \( x \) |

| \( \perp \) (perpendicular) | ✓ | If \( L_1 \perp L_2 \), then \( L_2 \perp L_1 \) |

| \( = \) (equality) | ✓ | If \( a = b \), then \( b = a \) |

| \( \leq \) | ✗ | \( 3 \leq 5 \), but \( 5 \not\leq 3 \) |

Antisymmetric property

A relation \( R \) on \( A \) is antisymmetric if whenever both \( a \) is related to \( b \) and \( b \) is related to \( a \), the two elements must be the same:

\[ \forall a, b \in A: [(a, b) \in R \wedge (b, a) \in R] \implies a = b \]

Equivalently: if \( a \neq b \) and \( (a, b) \in R \), then \( (b, a) \notin R \). There can be no “back-and-forth” arrows between distinct elements.

In terms of the inverse relation:

\[ R \text{ is antisymmetric} \iff R \cap R^{-1} \subseteq \Delta_A \]

Visually: In the relation matrix, if there is a 1 at position \( (i, j) \) with \( i \neq j \), then position \( (j, i) \) must be 0. The only 1s that can appear at mirror positions are those on the diagonal.

Example. Let \( A = \{a, b, c\} \) and \( R = \{(a,a),\ (a,b),\ (b,c),\ (b,b)\} \).

Computing: \( R^{-1} = \{(a,a),\ (b,a),\ (c,b),\ (b,b)\} \). \( R \cap R^{-1} = \{(a,a),\ (b,b)\} \subseteq \Delta_A \). Therefore \( R \) is antisymmetric. ✓

Its relation matrix:

| a | b | c | |

|---|---|---|---|

| a | 1 | 1 | 0 |

| b | 0 | 1 | 1 |

| c | 0 | 0 | 0 |

The 1s off the diagonal — at \( (a,b) \) and \( (b,c) \) — have 0s at their mirror positions \( (b,a) \) and \( (c,b) \). ✓

Classic examples:

| Relation | Antisymmetric? | Reason |

|---|---|---|

| \( \leq \) | ✓ | If \( a \leq b \) and \( b \leq a \), then \( a = b \) |

| \( \subseteq \) | ✓ | If \( A \subseteq B \) and \( B \subseteq A \), then \( A = B \) |

| \( \mid \) (divides) on \( \mathbb{N} \) | ✓ | If \( m \mid n \) and \( n \mid m \), then \( m = n \) |

| \( < \) | ✓ | \( a < b \) and \( b < a \) can never both hold |

Symmetric, not symmetric, and antisymmetric

Just as “not reflexive” is the negation of “reflexive” (and should not be confused with “irreflexive”), the same pattern applies here:

| Concept | Condition | Definition |

|---|---|---|

| Symmetric | If \( (a,b) \in R \), always \( (b,a) \in R \) | \( R = R^{-1} \) |

| Not symmetric | At least one pair where \( (a,b) \in R \) but \( (b,a) \notin R \) | \( R \neq R^{-1} \) |

| Antisymmetric | If \( (a,b) \in R \) and \( (b,a) \in R \), then \( a = b \) | \( R \cap R^{-1} \subseteq \Delta_A \) |

The key: “Not symmetric” is the negation of symmetric — a single pair without its mirror is enough. “Antisymmetric” is an independent concept: it requires that the only mirrored pairs be diagonal ones. A relation can be both symmetric and antisymmetric (if it contains only diagonal pairs), or neither.

Comparative example. Let \( A = \{1, 2, 3\} \):

| Relation | Classification |

|---|---|

| \( R_1 = \{(1,2),\ (2,1),\ (2,3),\ (3,2)\} \) | Symmetric (every pair has its mirror) |

| \( R_2 = \{(1,2),\ (2,3)\} \) | Not symmetric and antisymmetric |

| \( R_3 = \{(1,1),\ (2,2)\} \) | Symmetric and antisymmetric (diagonal only) |

| \( R_4 = \{(1,2),\ (2,1),\ (2,3)\} \) | Not symmetric and not antisymmetric |

Transitive property

A relation \( R \) on \( A \) is transitive if whenever \( a \) is related to \( b \) and \( b \) is related to \( c \), then \( a \) is also related to \( c \):

\[ \forall a, b, c \in A: [(a, b) \in R \wedge (b, c) \in R] \implies (a, c) \in R \]

Equivalently, in terms of composition:

\[ R \text{ is transitive} \iff R \circ R \subseteq R \]

Visually: In the relation matrix, \( R \) is transitive when for every pair of 1s at positions \( (i, j) \) and \( (j, k) \), there is also a 1 at position \( (i, k) \). In other words, \( R \circ R \) introduces no new pairs that are not already in \( R \).

Example (transitive). Let \( A = \{1, 2, 3\} \) and \( R = \{(1,1),\ (1,2),\ (1,3),\ (2,2),\ (2,3),\ (3,3)\} \).

Checking the chains:

- \( (1,1) \wedge (1,2) \implies (1,2) \) ✓

- \( (1,2) \wedge (2,3) \implies (1,3) \) ✓

- \( (2,2) \wedge (2,3) \implies (2,3) \) ✓

- All remaining combinations also check out.

\( R \) is transitive. ✓

Its relation matrix:

| 1 | 2 | 3 | |

|---|---|---|---|

| 1 | 1 | 1 | 1 |

| 2 | 0 | 1 | 1 |

| 3 | 0 | 0 | 1 |

Notice: this is the relation \( \leq \) on \( \{1,2,3\} \). The “upper-triangular” shape (all 1s on and above the diagonal, all 0s below) is characteristic of transitive order relations.

Example (not transitive). Let \( A = \{1, 2, 3, 4\} \) and:

\[ R = \{(1,1),\ (1,2),\ (2,2),\ (3,1),\ (4,1),\ (4,2),\ (4,3)\} \]

Checking the chains:

- \( (1,1) \wedge (1,2) \implies (1,2) \) ✓ (already in \( R \))

- \( (1,2) \wedge (2,2) \implies (1,2) \) ✓

- \( (3,1) \wedge (1,1) \implies (3,1) \) ✓

- \( (3,1) \wedge (1,2) \implies (3,2) \) ✗ — \( (3,2) \notin R \)

Since \( (3,2) \notin R \), the relation is not transitive.

Classic examples:

| Relation | Transitive? | Reason |

|---|---|---|

| \( \leq \) | ✓ | \( a \leq b \wedge b \leq c \implies a \leq c \) |

| \( \subseteq \) | ✓ | \( A \subseteq B \wedge B \subseteq C \implies A \subseteq C \) |

| \( \mid \) (divides) | ✓ | \( m \mid n \wedge n \mid k \implies m \mid k \) |

| \( \perp \) | ✗ | \( a \perp b \wedge b \perp c \) does not imply \( a \perp c \) |

| \( \parallel \) | ✗ | \( a \parallel b \wedge b \parallel c \) does not imply \( a \parallel c \) (they could be the same line) |

Intransitive property

A relation \( R \) on \( A \) is intransitive if the transitive chain never holds — whenever \( a \) is related to \( b \) and \( b \) to \( c \), then \( a \) is not related to \( c \):

\[ \forall a, b, c \in A: [(a, b) \in R \wedge (b, c) \in R] \implies (a, c) \notin R \]

Equivalently:

\[ R \text{ is intransitive} \iff R \circ R \cap R = \emptyset \]

Visually: In the relation matrix, \( R \) is intransitive when for every pair of 1s at positions \( (i, j) \) and \( (j, k) \), the position \( (i, k) \) is always 0.

Example (intransitive). Let \( A = \{1, 2, 3\} \) and \( R = \{(1, 2),\ (2, 3),\ (3, 1)\} \).

Checking all chains:

- \( (1,2) \wedge (2,3) \implies (1,3) \notin R \) ✓

- \( (2,3) \wedge (3,1) \implies (2,1) \notin R \) ✓

- \( (3,1) \wedge (1,2) \implies (3,2) \notin R \) ✓

No chain produces a pair that’s in \( R \). Therefore \( R \) is intransitive. ✓

Its relation matrix:

| 1 | 2 | 3 | |

|---|---|---|---|

| 1 | 0 | 1 | 0 |

| 2 | 0 | 0 | 1 |

| 3 | 1 | 0 | 0 |

This is a “cyclic” relation (1→2→3→1) where every 2-step chain lands on a 0.

Classic examples:

| Relation | Intransitive? | Reason |

|---|---|---|

| “is the parent of” | ✓ | If \( a \) is the parent of \( b \) and \( b \) of \( c \), then \( a \) is the grandparent of \( c \), not the parent |

| “beats” (in a round-robin tournament) | ✓ | If \( A \) beats \( B \) and \( B \) beats \( C \), \( A \) does not necessarily beat \( C \) in a direct match |

Transitive, not transitive, and intransitive

Following the same pattern as before:

| Concept | Condition on chains \( aRb \wedge bRc \) | Definition |

|---|---|---|

| Transitive | Always implies \( (a,c) \in R \) | \( R \circ R \subseteq R \) |

| Not transitive | At least one chain where \( (a,c) \notin R \) | \( R \circ R \not\subseteq R \) |

| Intransitive | Always implies \( (a,c) \notin R \) | \( R \circ R \cap R = \emptyset \) |

The key: “Not transitive” is the negation of transitive — one chain without a shortcut is enough. “Intransitive” is a stronger condition: no chain may have a shortcut. Every intransitive relation is not transitive, but not every non-transitive relation is intransitive.

Comparative example. Let \( A = \{1, 2, 3\} \):

| Relation | Classification |

|---|---|

| \( R_1 = \{(1,2),\ (2,3),\ (1,3)\} \) | Transitive (the chain 1→2→3 has its shortcut 1→3) |

| \( R_2 = \{(1,2),\ (2,3),\ (3,1)\} \) | Intransitive (no chain has a shortcut) |

| \( R_3 = \{(1,2),\ (2,3),\ (1,3),\ (3,1)\} \) | Not transitive (the chain 3→1→2 needs \( (3,2) \) but it’s missing), and not intransitive either (the chain 1→2→3 does have the shortcut 1→3) |

Connected (or total) property

A relation \( R \) on \( A \) is connected (or total) if for any two elements of \( A \), at least one is related to the other:

\[ \forall a, b \in A: (a, b) \in R \vee (b, a) \in R \]

In plain terms: Given any two elements, you can always compare them — there is no pair left incomparable. Think of a tournament where every participant has faced every other at least once.

Example (connected). The relation \( \leq \) on the integers is connected: given any two integers, say 3 and 7, we can always say \( 3 \leq 7 \). There are no two integers that can’t be compared with \( \leq \).

Example (not connected). Let \( A = \{1, 2, 3\} \) and \( R = \{(1,1),\ (1,2),\ (2,2),\ (3,3)\} \). What about elements 2 and 3? Neither \( (2,3) \in R \) nor \( (3,2) \in R \) — they are incomparable. Therefore \( R \) is not connected.

Summary of properties

| Property | Formal definition | Matrix interpretation |

|---|---|---|

| Reflexive | \( \forall a: (a,a) \in R \) | Entire main diagonal is 1 |

| Not reflexive | \( \exists a: (a,a) \notin R \) | At least one 0 on the diagonal |

| Irreflexive | \( \forall a: (a,a) \notin R \) | Entire main diagonal is 0 |

| Symmetric | \( (a,b) \in R \implies (b,a) \in R \) | \( M_{ij} = M_{ji} \) (mirror matrix) |

| Not symmetric | \( \exists (a,b) \in R: (b,a) \notin R \) | At least one mirrorless pair |

| Antisymmetric | \( (a,b) \in R \wedge (b,a) \in R \implies a = b \) | Off the diagonal, no mirrored 1s |

| Transitive | \( (a,b) \in R \wedge (b,c) \in R \implies (a,c) \in R \) | If \( M_{ij}=1 \) and \( M_{jk}=1 \), then \( M_{ik}=1 \) |

| Not transitive | \( \exists \) chain without shortcut | At least one chain with \( M_{ik}=0 \) |

| Intransitive | \( (a,b) \in R \wedge (b,c) \in R \implies (a,c) \notin R \) | If \( M_{ij}=1 \) and \( M_{jk}=1 \), then \( M_{ik}=0 \) |

| Connected | \( \forall a,b: (a,b) \in R \vee (b,a) \in R \) | Every pair of elements is comparable |

Classes of relations

Combining the properties above, we get special types of relations that appear throughout mathematics. Each class is defined by a specific combination of properties.

Overview

| Class | Reflexive | Symmetric | Antisymmetric | Transitive | Connected |

|---|---|---|---|---|---|

| Reflexive relation | ✓ | ||||

| Symmetric relation | ✓ | ||||

| Transitive relation | ✓ | ||||

| Dependency | ✓ | ✓ | |||

| Preorder | ✓ | ✓ | |||

| Equivalence | ✓ | ✓ | ✓ | ||

| Partial order | ✓ | ✓ | ✓ | ||

| Total order | ✓ | ✓ | ✓ | ✓ |

Reflexive relation

A relation \( R \) on \( A \) is a reflexive relation if it satisfies only the reflexive property:

\[ \forall a \in A: (a,a) \in R \]

This is the most elementary class — it only requires each element to be related to itself. It places no restrictions on how distinct elements relate to one another.

Example. Let \( A = \{a, b, c, d\} \) and:

\[ R = \{(a,a),\ (a,b),\ (b,b),\ (b,c),\ (c,c),\ (d,b),\ (d,d)\} \]

The pairs \( (a,a),\ (b,b),\ (c,c),\ (d,d) \) are all present, so \( R \) is reflexive. The additional pairs \( (a,b),\ (b,c),\ (d,b) \) do not affect reflexivity — they may or may not be present.

Why does it matter? Reflexive relations are the starting point for all more elaborate classes. Notice in the table above that preorders, equivalences, and orders all require reflexivity. Adding further properties on top of a reflexive relation is how those classes are built.

Symmetric relation

A relation \( R \) on \( A \) is a symmetric relation if it satisfies only the symmetric property:

\[ \forall a, b \in A: (a,b) \in R \implies (b,a) \in R \]

Equivalently: \( R = R^{-1} \). If a pair belongs to the relation, its mirror does too.

Example. Let \( A = \{1, 2, 3\} \) and:

\[ R = \{(1,2),\ (2,1),\ (2,3),\ (3,2)\} \]

Each pair has its mirror: \( (1,2) \leftrightarrow (2,1) \) and \( (2,3) \leftrightarrow (3,2) \). So \( R \) is symmetric.

Note. A symmetric relation by itself does not require reflexivity or transitivity. When it is also reflexive and transitive, it becomes an equivalence relation.

Transitive relation

A relation \( R \) on \( A \) is a transitive relation if it satisfies only the transitive property:

\[ \forall a, b, c \in A: [(a,b) \in R \wedge (b,c) \in R] \implies (a,c) \in R \]

Equivalently: \( R \circ R \subseteq R \). Every 2-step chain has a direct shortcut.

Example. Let \( A = \{1, 2, 3\} \) and:

\[ R = \{(1,2),\ (2,3),\ (1,3)\} \]

The only chain is \( (1,2) \wedge (2,3) \), and the shortcut \( (1,3) \) is present. So \( R \) is transitive.

Note. A transitive relation by itself does not require reflexivity or symmetry. When it is also reflexive, it becomes a preorder.

Dependency relation

A relation \( R \) on \( A \) is a dependency relation (also called a tolerance relation) if it is reflexive and symmetric:

\[ \begin{cases} \forall a \in A: & (a,a) \in R \\\ \forall a,b \in A: & (a,b) \in R \implies (b,a) \in R \end{cases} \]

Each element is related to itself, and whenever \( a \) depends on \( b \), \( b \) depends on \( a \). Transitivity is not required.

Example. Let \( A = \{1, 2, 3, 4\} \) and:

\[ R = \{(1,1),\ (2,2),\ (3,3),\ (4,4),\ (1,2),\ (2,1),\ (2,3),\ (3,2)\} \]

We verify:

- Reflexive: \( (1,1),\ (2,2),\ (3,3),\ (4,4) \in R \) ✓

- Symmetric: \( (1,2) \leftrightarrow (2,1) \) ✓ and \( (2,3) \leftrightarrow (3,2) \) ✓

- Transitive? \( (1,2) \wedge (2,3) \implies (1,3) \), but \( (1,3) \notin R \) ✗

So \( R \) is a dependency relation (reflexive and symmetric), but not an equivalence relation since transitivity fails.

Connection. Adding the transitive property to a dependency relation turns it into an equivalence relation.

Preorder

A relation \( R \) on \( A \) is a preorder if it is reflexive and transitive:

\[ \begin{cases} \forall a \in A: & (a,a) \in R \\\ \forall a,b,c \in A: & [(a,b) \in R \wedge (b,c) \in R] \implies (a,c) \in R \end{cases} \]

The preorder is the most general class we will study — it only asks that each element be related to itself and that the relation can be “chained.”

Example. On the integers \( \mathbb{Z} \), define \( R \) by “\( x \) divides \( y \)” (with \( x \neq 0 \)). This relation is reflexive (\( n \mid n \)) and transitive (\( m \mid n \wedge n \mid k \implies m \mid k \)), so it is a preorder.

Note. A preorder that is also symmetric is an equivalence relation, and a preorder that is also antisymmetric is a partial order.

Equivalence relation

A relation \( R \) on \( A \) is an equivalence relation if it is reflexive, symmetric, and transitive:

\[ \begin{cases} \text{Reflexive:} & \forall a \in A:\ (a,a) \in R \\\ \text{Symmetric:} & \forall a,b \in A:\ (a,b) \in R \implies (b,a) \in R \\\ \text{Transitive:} & \forall a,b,c \in A:\ [(a,b) \in R \wedge (b,c) \in R] \implies (a,c) \in R \end{cases} \]

Intuition. An equivalence relation groups the elements of \( A \) into “families” of elements that are considered “equal” under some criterion. Within each family, every element is related to every other.

Example 1. Let \( A = \{1, 3, 5\} \) and \( R = \{(1,1),\ (3,3),\ (5,5),\ (1,3),\ (3,1)\} \).

- Reflexive: \( (1,1),\ (3,3),\ (5,5) \in R \) ✓

- Symmetric: \( (1,3) \in R \) and \( (3,1) \in R \) ✓

- Transitive: \( (1,3) \wedge (3,1) \implies (1,1) \) ✓; \( (3,1) \wedge (1,3) \implies (3,3) \) ✓

\( R \) is an equivalence relation. ✓

Example 2. Equality \( = \) is the most basic equivalence relation:

- \( a = a \) ✓ (reflexive)

- \( a = b \implies b = a \) ✓ (symmetric)

- \( a = b \wedge b = c \implies a = c \) ✓ (transitive)

Example 3. Modular congruence. On the integers, the relation “\( a \) is congruent to \( b \) modulo \( n \)” (i.e., \( n \mid (a – b) \)) is an equivalence relation:

\[ a \equiv b \pmod{n} \iff n \mid (a – b) \]

- Reflexive: \( a – a = 0 \) and \( n \mid 0 \) ✓

- Symmetric: if \( n \mid (a-b) \), then \( n \mid (b-a) \) ✓

- Transitive: if \( n \mid (a-b) \) and \( n \mid (b-c) \), adding gives \( n \mid (a-c) \) ✓

Example 4. Other familiar equivalence relations:

| Relation | Set | Criterion for “sameness” |

|---|---|---|

| Parallelism (\( \parallel \)) | Lines in the plane | Same direction |

| Congruence (\( \cong \)) | Geometric figures | Same shape and size |

| Set equality | Sets | Same elements |

| Parity | \( \mathbb{Z} \) | Same remainder when divided by 2 |

Coming up. Equivalence relations have far-reaching consequences: they divide \( A \) into disjoint subsets called equivalence classes, which in turn form a partition of \( A \). We will develop these ideas in detail in the next post.

Partial order relation

A relation \( R \) on \( A \) is a partial order if it is reflexive, antisymmetric, and transitive:

\[ \begin{cases} \text{Reflexive:} & \forall a \in A:\ (a,a) \in R \\\ \text{Antisymmetric:} & \forall a,b \in A:\ [(a,b) \in R \wedge (b,a) \in R] \implies a = b \\\ \text{Transitive:} & \forall a,b,c \in A:\ [(a,b) \in R \wedge (b,c) \in R] \implies (a,c) \in R \end{cases} \]

The pair \( (A, R) \) is called a partially ordered set (or poset).

It is called “partial” because not all elements need to be comparable — there may be pairs \( a, b \) where neither \( aRb \) nor \( bRa \) holds.

Key difference from equivalence: Equivalence groups “equal” elements (symmetric). Order ranks them in a hierarchy (antisymmetric). Both require reflexivity and transitivity, but differ in their third property.

Example 1. The relation \( \leq \) on \( \mathbb{Z} \):

- Reflexive: \( a \leq a \) ✓

- Antisymmetric: \( a \leq b \wedge b \leq a \implies a = b \) ✓

- Transitive: \( a \leq b \wedge b \leq c \implies a \leq c \) ✓

\( (\mathbb{Z}, \leq) \) is a poset.

Example 2. The relation \( \subseteq \) on the power set \( \mathcal{P}(A) \):

- Reflexive: \( X \subseteq X \) ✓

- Antisymmetric: \( X \subseteq Y \wedge Y \subseteq X \implies X = Y \) ✓

- Transitive: \( X \subseteq Y \wedge Y \subseteq Z \implies X \subseteq Z \) ✓

\( (\mathcal{P}(A), \subseteq) \) is a poset. But it is not a total order, because some sets are incomparable: \( \{a\} \not\subseteq \{b\} \) and \( \{b\} \not\subseteq \{a\} \).

Total order relation

A relation \( R \) on \( A \) is a total order if it is a partial order that is also connected:

\[ \text{Total order} = \text{Partial order} + \text{Connectedness} \]

That is, it is reflexive, antisymmetric, transitive, and any two elements are comparable:

\[ \forall a, b \in A: (a, b) \in R \vee (b, a) \in R \]

The pair \( (A, R) \) is called a totally ordered set (or chain).

Example. \( (\mathbb{Z}, \leq) \) is a total order: given any two integers, we can always compare them with \( \leq \).

Counterexample. \( (\mathcal{P}(A), \subseteq) \) is a partial order but not a total order: there exist incomparable sets.

Note. A total order is simply a partial order “with no gaps”: no two elements escape comparison. All real numbers under \( \leq \) form a total order, while sets under \( \subseteq \) form only a partial order.

Comparative summary

| Class | Properties | Classic example | Effect on \( A \) |

|---|---|---|---|

| Reflexive relation | Reflexive | Equality (\( = \)) | Each element is related to itself |

| Symmetric relation | Symmetric | “is a sibling of” | Pairs always come in mirrors |

| Transitive relation | Transitive | \( \leq \) | Chains have shortcuts |

| Dependency | Reflexive + Symmetric | “is a neighbor of” | Mutual relation without chaining |

| Preorder | Reflexive + Transitive | Divisibility on \( \mathbb{Z} \) | A “precedence” relation |

| Equivalence | Reflexive + Symmetric + Transitive | Congruence mod \( n \) | Groups into classes |

| Partial order | Reflexive + Antisymmetric + Transitive | \( \subseteq \) on \( \mathcal{P}(A) \) | Ranks (partially) |

| Total order | Partial order + Connected | \( \leq \) on \( \mathbb{R} \) | Ranks (completely) |

Correspondences: the bridge to functions

We have studied homogeneous relations and their properties. But heterogeneous relations — that is, relations \( R \subseteq A \times B \) with \( A \neq B \) — also have their own classification, based on how the arrows are distributed between the two sets.

The four conditions

Given a relation \( R: A \to B \), four independent conditions are analyzed:

| Condition | Name | Description |

|---|---|---|

| e.i. | Existence of image | Every element of \( A \) has at least one image in \( B \) |

| u.i. | Uniqueness of image | Every element of \( A \) has at most one image in \( B \) |

| e.o. | Existence of origin | Every element of \( B \) has at least one origin in \( A \) |

| u.o. | Uniqueness of origin | Every element of \( B \) has at most one origin in \( A \) |

Formally:

\[ \text{e.i.} \quad \forall a \in A,\ \exists b \in B: (a, b) \in R \] \[ \text{u.i.} \quad \forall a \in A: [(a, b_1) \in R \wedge (a, b_2) \in R] \implies b_1 = b_2 \] \[ \text{e.o.} \quad \forall b \in B,\ \exists a \in A: (a, b) \in R \] \[ \text{u.o.} \quad \forall b \in B: [(a_1, b) \in R \wedge (a_2, b) \in R] \implies a_1 = a_2 \]

A note on terminology. In a pair \( (a, b) \in R \), the origin is the first component \( a \) and the image is the second component \( b \). This parallels the domain and range of homogeneous relations: the image conditions (e.i. and u.i.) concern the source set \( A \), while the origin conditions (e.o. and u.o.) concern the target set \( B \).

Types of correspondence

The combination of these conditions defines distinct types:

| Type | e.i. | u.i. | e.o. | u.o. | Description |

|---|---|---|---|---|---|

| Correspondence | Any heterogeneous relation | ||||

| Function (mapping) | ✓ | ✓ | Each element of \( A \) has exactly one image | ||

| Injective | ✓ | ✓ | ✓ | Function where distinct origins give distinct images | |

| Surjective | ✓ | ✓ | ✓ | Function where every element of \( B \) is reached | |

| Bijective | ✓ | ✓ | ✓ | ✓ | Both injective and surjective |

Important note. The most relevant type for this course is the function (or mapping), which combines existence and uniqueness of image: each \( a \in A \) corresponds to exactly one \( b \in B \). Functions are arguably the most important concept in all of modern mathematics.

Coming up. We will not develop correspondences and functions here — they deserve their own dedicated post, where we will study them in depth: formal definition, complete classification (injective, surjective, bijective), function composition, inverse functions, and much more.

Chapter summary

We started from the Cartesian product — the universe of all combinations — and defined binary relations as the subsets that “filter” the interesting connections. Throughout this post:

- We defined binary relations and their notation (\( aRb \), \( (a,b) \in R \))

- We established the domain and range as the “active” sets of the relation

- We visualized relations using arrow diagrams, matrices, directed graphs, and the Cartesian plane

- We built the inverse relation \( R^{-1} \) and the composition \( S \circ R \)

- We analyzed the fundamental properties: reflexive, irreflexive, symmetric, antisymmetric, transitive, intransitive, and connected — distinguishing in each case the property, its negation, and its strict version

- We classified relations into 8 classes: reflexive, symmetric, transitive, dependency, preorder, equivalence, partial order, and total order

- We introduced correspondences as a bridge toward functions

With these tools, we have the complete foundation needed to study equivalence relations in depth and, from there, functions.

What’s coming next

With binary relations under our belt, we are ready to tackle the following topics in upcoming posts:

- Equivalence relations and partitions — we will go deeper into how equivalence relations divide a set into disjoint classes, the fundamental theorem connecting equivalences with partitions, and detailed examples such as modular arithmetic and the quotient set.

- Mathematical correspondences — we will develop in full the four conditions (existence/uniqueness of image and origin) introduced here, with complete examples and arrow diagrams for each type of correspondence.

- Functions — we will study functions as a special type of correspondence, with their complete classification (injective, surjective, bijective), composition, inverses, and applications.

See you in the next post!